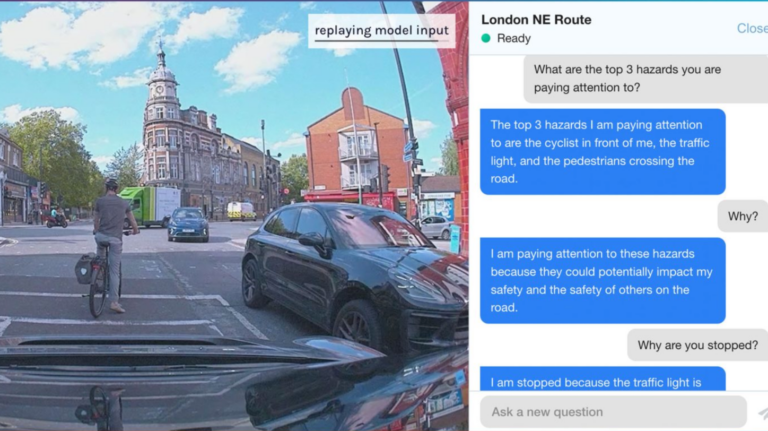

A vision-language-action model (VLAM) has been developed to enable artificial intelligence (AI) driving software to explain the reasoning behind its actions while driving.

The new solution, named Lingo-1, was developed by self-driving technology firm Wayve to offer deeper insight into the decision-making and reasoning capabilities of its AI models.

This, the company claims, is critical to building a safe self-driving AI. The new model was designed to enhance the interpretability of Wayve's current AI Driver.

The VLAM was trained on data from the company's drivers, whose commentary enables Lingo-1 to explain its reasoning in natural language.

The intention behind this is to enable people to better understand the decision-making capabilities of the AI driving technology.

In addition to providing commentary, Lingo-1 can respond to questions about some of its actions while driving.

By incorporating natural language sources, such as the Highway Code and other safety-relevant content, the model can reportedly make retraining Wayve's AI models more straightforward.

By improving the raw intelligence of its AI Driver, Wayve can accelerate the learning process, enhance accuracy, and increase the technology’s capacity to handle diverse driving tasks.

Alex Kendall, co-founder and CEO of Wayve, said: “Wayve is currently testing its self-driving technology daily on UK roads and is undertaking Europe’s largest last-mile autonomous grocery delivery trial with Asda, benefitting 170,000 residents across 72,000 households in London.

“Developments like Lingo-1 will help make the commercial deployment of self-driving vehicles a reality. Explaining decisions in natural language will transform the learning and explainability of self-driving technology…Lingo-1 opens up many possibilities for self-driving, improving the intelligence of our end-to-end AI Driver as well as bridging the gap of public trust – and this is just the beginning of maximising its potential.”

Wayve is a finalist for the first-ever Robotics & Automation Awards in the Innovation in Transportation category for its work on autonomous deliveries with Asda.